Reinforcement Learning for POMDP: Rollout and Policy Iteration with Application to Sequential Repair

Sushmita Bhattacharya, Thomas Wheeler

advised by Stephanie Gil, Dimitri P. Bertsekas

Abstract

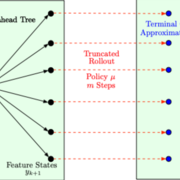

We study rollout algorithms that combine limited lookahead with terminal cost function approximation for partially observable Markov decision processes (POMDPs). We demonstrate their effectiveness on a sequential pipeline repair problem, which also arises in other contexts, such as search and rescue. We provide performance bounds and empirical validation of the methodology, both for a single rollout iteration and for multiple iterations with intermediate policy space approximations.